LLM Memory in 2026: Why Most Benchmark Claims Don't Survive Scrutiny

The race to build AI systems that “remember” their users has produced a flurry of viral benchmark claims, several commercial products charging between $19 and $249 per month, and a wave of confusion about what is actually working. A widely shared open-source project recently hit 5,400 GitHub stars in under 24 hours on the strength of a 96.6% recall score, only to have a detailed methodological critique poke serious holes in the claim within days.

For organizations evaluating AI memory layers, whether as a buyer choosing between vendors or as a builder rolling their own, the gap between headline numbers and shippable behavior matters. This article unpacks how LLM memory actually works, why the benchmarks can mislead, and what an honest evaluation looks like, drawn from a head-to-head benchmark run against the system powering Tizenegy, an AI companion platform built on Cloudflare’s edge stack.

Why Memory Is the Hardest Problem in Conversational AI

Modern frontier models such as Claude Opus 4.7 and GPT-5.4 ship with roughly one million tokens of context. That sounds enormous until the math is run on a real product: six months of daily conversation between a user and a companion easily exceeds the window. Stuffing more context in does not scale, both because of cost and because retrieval quality degrades as the haystack grows.
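A back-of-the-envelope check makes the point concrete. The sketch below is illustrative only: the usage figures (60 messages per day, 100 tokens per message) are assumptions chosen for the example, not measurements from any product. Only the one-million-token window comes from the text above.

```python
# Back-of-the-envelope token budget. All usage figures are illustrative
# assumptions, not measurements; only the window size comes from the article.
CONTEXT_WINDOW_TOKENS = 1_000_000  # "roughly one million tokens"
MESSAGES_PER_DAY = 60              # assumed: user and companion turns combined
TOKENS_PER_MESSAGE = 100           # assumed average message length
DAYS = 182                         # about six months


def history_tokens(days: int, messages_per_day: int, tokens_per_message: int) -> int:
    """Total tokens needed to replay the full conversation history."""
    return days * messages_per_day * tokens_per_message


def days_until_full(window: int, messages_per_day: int, tokens_per_message: int) -> int:
    """Whole days of history that fit before the window overflows."""
    return window // (messages_per_day * tokens_per_message)


total = history_tokens(DAYS, MESSAGES_PER_DAY, TOKENS_PER_MESSAGE)
print(total, total > CONTEXT_WINDOW_TOKENS)
print(days_until_full(CONTEXT_WINDOW_TOKENS, MESSAGES_PER_DAY, TOKENS_PER_MESSAGE))
```

Under these assumed figures the full history stops fitting after roughly 166 days, before any system prompt or response budget is counted.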

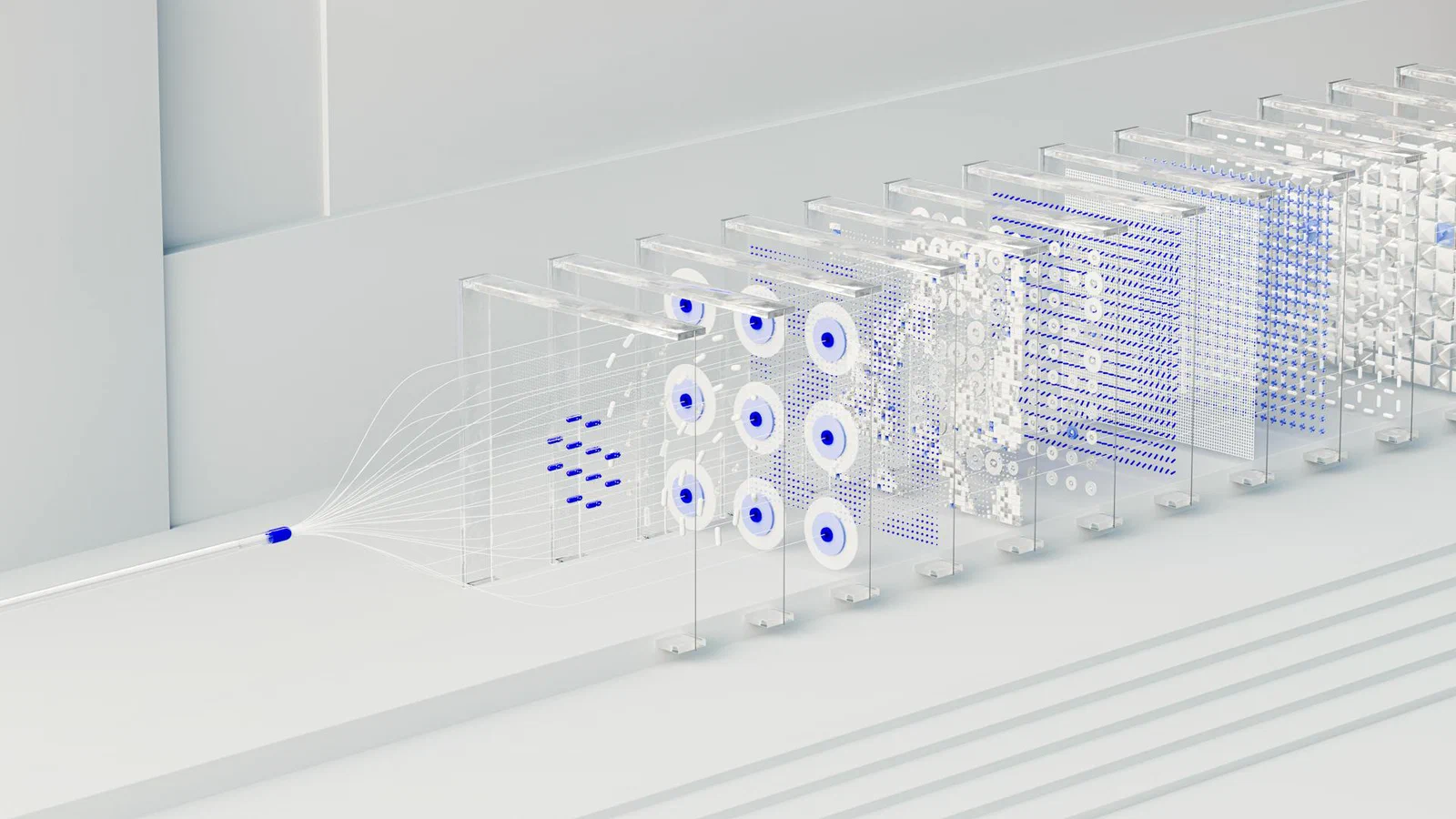

The standard architecture is well understood:

- After each conversation turn, important content is extracted (facts, preferences, events).

- The extracted content is embedded as vectors and stored in a vector database.

- When a new message arrives, the system retrieves the most relevant memories and injects them into the prompt.

The challenge is not the diagram. The challenge is that every step of the diagram has half a dozen design choices, each of which moves the benchmark needle by a few percentage points and the user-perceived quality by considerably more.
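The store-and-retrieve loop described above can be sketched in a few lines. This is a toy under stated assumptions: `embed` is a bag-of-words stand-in for a real embedding model, `MemoryStore` is a hypothetical in-memory list rather than a vector database, and the extraction step is skipped (memories are added as already-extracted sentences).

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryStore:
    """Hypothetical in-memory store; a real system would use a vector database."""

    def __init__(self):
        self.items = []  # list of (text, vector) pairs

    def add(self, text):
        self.items.append((text, embed(text)))

    def search(self, query, top_k=3):
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:top_k]]


def build_prompt(store, message, top_k=2):
    """Inject the most relevant memories ahead of the new user message."""
    memories = store.search(message, top_k)
    lines = ["Relevant memories:"] + [f"- {m}" for m in memories] + ["", f"User: {message}"]
    return "\n".join(lines)


store = MemoryStore()
store.add("user has a sister named Emma")
store.add("user loves hiking in the Alps")
store.add("user works in tech")
print(build_prompt(store, "where does the user like hiking", top_k=1))
```

Every line of this sketch hides one of the design choices mentioned above: what gets stored, which model embeds it, how many results are injected, and how they are ordered.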

A Real Production System

Tizenegy runs its memory layer on Cloudflare Workers, with D1 (SQLite) for relational data and Vectorize for similarity search. The pipeline has four stages:

- Asynchronous extraction. After every message pair, a queue consumer runs a cheap LLM (Llama 3.1 8B) to pull out entities, facts, preferences, and a one-to-two sentence summary. The chat itself never waits on this step.

- Contradiction detection. When a fact changes (a user moves from Google to Meta, switches partners, adopts a pet), the old fact is invalidated with a timestamp and the new one supersedes it. The companion does not awkwardly ask about a job the user left.

- Semantic embedding. Memories are embedded with bge-base-en-v1.5 at 768 dimensions, namespaced per user, and filtered by companion.

- Composite ranking. At retrieval time, the score is 50% semantic similarity, 30% recency (with a six-day half-life), and 20% importance. A moderately similar recent memory can outrank a more semantically similar old one, which mirrors how human memory tends to prioritize.
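The composite ranking step can be illustrated with a minimal sketch. The weights and the six-day half-life come from the description above; the exponential decay form, the normalization of similarity and importance to [0, 1], and the `Candidate` type are assumptions made for the example, not the production code.

```python
from dataclasses import dataclass

W_SIMILARITY, W_RECENCY, W_IMPORTANCE = 0.5, 0.3, 0.2  # weights from the text
HALF_LIFE_DAYS = 6.0                                   # half-life from the text


@dataclass
class Candidate:
    text: str
    similarity: float  # assumed to be normalized to [0, 1]
    age_days: float
    importance: float  # assumed to be normalized to [0, 1]


def recency(age_days: float, half_life: float = HALF_LIFE_DAYS) -> float:
    """Exponential decay (an assumed form): 1.0 now, 0.5 after one half-life."""
    return 0.5 ** (age_days / half_life)


def composite_score(c: Candidate) -> float:
    """Weighted sum of similarity, recency, and importance."""
    return (W_SIMILARITY * c.similarity
            + W_RECENCY * recency(c.age_days)
            + W_IMPORTANCE * c.importance)


# Hypothetical candidates: an older, closer match versus a recent, looser one.
old = Candidate("mentioned a marathon goal", similarity=0.80, age_days=60, importance=0.5)
new = Candidate("went for a run yesterday", similarity=0.60, age_days=1, importance=0.5)
ranked = sorted([old, new], key=composite_score, reverse=True)
print([c.text for c in ranked])
```

With these example values the one-day-old memory ranks first even though its similarity is lower, because the 60-day-old memory's recency term has decayed to nearly zero.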

The asynchronous design is the unsung hero. Users never feel memory extraction in their chat latency, which is what makes the system shippable.

The MemPalace Phenomenon

In early April 2026, an open-source project called MemPalace went viral. Created by actress Milla Jovovich together with Ben Sigman, the repository accumulated 5,400 GitHub stars in under 24 hours and reached more than 15 million people. The headline claim: 96.6% recall on LongMemEval, a benchmark from an ICLR 2025 paper, with zero API calls and only local vector storage.

The architectural pitch was genuinely interesting. Borrowing from the ancient Method of Loci, MemPalace organizes memories into a “palace”:

- Wings (people or projects)

- Rooms (topics)

- Halls (memory types such as facts, preferences, events)

Rather than searching the entire memory store, the system searches the relevant wing and room first. The team reported a 34% retrieval boost over flat search from this metadata-filtered approach. They also chose to store everything verbatim, arguing that LLM-generated summaries waste tokens and lose information that the embedding model could otherwise exploit.
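The filtering idea can be sketched as follows. The `Memory` type and `filter_palace` function are hypothetical names for illustration and are not taken from the MemPalace repository; a real system would more likely push these conditions into the vector store as metadata filters.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    wing: str  # person or project
    room: str  # topic
    hall: str  # memory type: "fact", "preference", or "event"


def filter_palace(memories, wing=None, room=None, hall=None):
    """Keep only memories matching every filter that is set."""
    return [
        m for m in memories
        if (wing is None or m.wing == wing)
        and (room is None or m.room == room)
        and (hall is None or m.hall == hall)
    ]


# Hypothetical example data.
memories = [
    Memory("Emma prefers window seats", wing="emma", room="travel", hall="preference"),
    Memory("Emma flew to Lisbon in May", wing="emma", room="travel", hall="event"),
    Memory("Emma started a new job", wing="emma", room="work", hall="event"),
    Memory("Project Atlas ships in Q3", wing="atlas", room="planning", hall="fact"),
]

# Similarity search would then run over this smaller candidate set only.
candidates = filter_palace(memories, wing="emma", room="travel")
print([m.text for m in candidates])
```

The smaller candidate set is what makes the approach attractive, and it is also the risk: a memory filed in the wrong room is invisible to a filtered search.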

When the Benchmarks Don’t Hold Up

Then came a detailed technical analysis that picked the methodology apart. The critique landed several blows:

The 100% LoCoMo score was an artifact. LoCoMo conversations max out at 32 sessions. MemPalace ran retrieval with top_k=50, returning more items than the dataset contained. That is not retrieval; it is just returning everything.

The 96.6% LongMemEval number measures retrieval, not answers. The metric checks whether the right session appears in the top-five search results. It does not check whether the system answers the question correctly. No answer generation, no judge evaluation: a retrieval system can find the right session and still produce a wrong answer.
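Both retrieval points are easy to demonstrate. The sketch below assumes an "any relevant session in the top k" definition of recall, which matches the description above but may differ in detail from the official benchmark harness; the session identifiers are made up.

```python
import random


def recall_at_k(ranked_ids, relevant_ids, k):
    """1.0 if any relevant session appears in the top k results, else 0.0."""
    return 1.0 if set(ranked_ids[:k]) & set(relevant_ids) else 0.0


sessions = [f"session_{i}" for i in range(32)]  # corpus size cited for LoCoMo
relevant = {"session_7"}

random.seed(0)
shuffled = random.sample(sessions, k=len(sessions))  # a ranker with no skill

# k exceeds the corpus, so every session is returned and recall is trivially 1.0.
print(recall_at_k(shuffled, relevant, k=50))

# With a meaningful k, a ranking that puts the relevant session last scores 0.0.
worst = [s for s in sessions if s not in relevant] + list(relevant)
print(recall_at_k(worst, relevant, k=5))
```

Note that the function never sees a generated answer. It only checks session identifiers, which is why a high score says nothing about whether the final response is correct.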

The “knowledge graph” claim did not match the code. The marketing described contradiction detection. The actual knowledge_graph.py implements exact-match triple deduplication, which is a long way from temporal fact management.
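The distance between the two techniques is clearer side by side. The sketch below is not the MemPalace code or any vendor's implementation: `dedupe_exact` illustrates what exact-match deduplication does in general, and `TemporalFacts` is a hypothetical minimal version of temporal fact management that assumes each subject and predicate pair has a single current value. The dates are invented.

```python
from dataclasses import dataclass
from typing import Optional


def dedupe_exact(triples):
    """Exact-match deduplication: drops only identical triples."""
    seen, unique = set(), []
    for triple in triples:
        if triple not in seen:
            seen.add(triple)
            unique.append(triple)
    return unique


@dataclass
class Fact:
    subject: str
    predicate: str
    obj: str
    valid_from: str                   # ISO date string
    invalid_at: Optional[str] = None  # set when a newer fact supersedes this one


class TemporalFacts:
    """Assumes each (subject, predicate) holds one current value at a time."""

    def __init__(self):
        self.facts = []

    def assert_fact(self, subject, predicate, obj, at):
        for fact in self.facts:
            if (fact.subject, fact.predicate) == (subject, predicate) and fact.invalid_at is None:
                if fact.obj == obj:
                    return            # unchanged, nothing to record
                fact.invalid_at = at  # contradiction: close out the old fact
        self.facts.append(Fact(subject, predicate, obj, valid_from=at))

    def current(self, subject, predicate):
        for fact in self.facts:
            if (fact.subject, fact.predicate) == (subject, predicate) and fact.invalid_at is None:
                return fact.obj
        return None


triples = [("user", "works_at", "Google"), ("user", "works_at", "Google"), ("user", "works_at", "Meta")]
print(dedupe_exact(triples))  # both employers survive; nothing marks Google as stale

store = TemporalFacts()
store.assert_fact("user", "works_at", "Google", at="2025-01-10")
store.assert_fact("user", "works_at", "Meta", at="2026-03-02")
print(store.current("user", "works_at"))
```

Deduplication leaves both employers in the store with equal standing, while the temporal version keeps the history but answers "where does the user work now" with a single value.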

The compression format regressed quality. MemPalace’s own documentation reports that its AAAK compression dialect scores 84.2% versus 96.6% for raw storage, a 12.4 percentage-point drop. At small scale, the compressed form actually uses more tokens than raw text.

The internal BENCHMARKS.md file in the repository was honest about most of these caveats. The issue was the gap between that internal documentation and the headline claims circulating on social media.

Running the Benchmarks Honestly

Reading the critique, two questions sat unanswered for any production team:

- How does a real, running system actually score?

- Does the palace structure help, hurt, or wash out?

Answering them required setting up LongMemEval-S (500 questions across six categories: single-session facts, multi-session reasoning, temporal questions, knowledge updates, preferences, and abstention tests) with roughly 53 conversation sessions per question as the search corpus.
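The results tables report NDCG@10 alongside recall. As a reference point, a minimal binary-relevance NDCG can be sketched as below; this is the textbook formulation, and whether the benchmark harness uses exactly this variant is an assumption. The session identifiers are placeholders.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain over the first k ranks (rank 1 is undiscounted)."""
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(relevances[:k], start=1))


def ndcg_at_k(ranked_ids, relevant_ids, k=10):
    """Binary-relevance NDCG: 1.0 when all relevant items lead the ranking."""
    gains = [1.0 if doc_id in relevant_ids else 0.0 for doc_id in ranked_ids]
    ideal = [1.0] * min(len(relevant_ids), k)
    ideal_dcg = dcg_at_k(ideal, k)
    return dcg_at_k(gains, k) / ideal_dcg if ideal_dcg else 0.0


relevant = {"s3"}
print(ndcg_at_k(["s3", "s1", "s2"], relevant))  # relevant session at rank 1
print(ndcg_at_k(["s1", "s2", "s3"], relevant))  # same session at rank 3
```

Unlike recall, NDCG rewards placing the right session near the top, so two systems with identical Recall@10 can still differ on this metric.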

Results with bge-base-en-v1.5 (production model)

| Mode | Recall@5 | Recall@10 | NDCG@10 |

|---|---|---|---|

| Raw (baseline) | 96.0% | 97.6% | 91.3% |

| Extracted (with full LLM pipeline) | 96.0% | 97.6% | 91.3% |

| Palace (room-filtered) | 94.6% | 96.2% | 90.0% |

Results with all-MiniLM-L6-v2 (MemPalace’s model)

| Mode | Recall@5 | Recall@10 | NDCG@10 |

|---|---|---|---|

| Raw | 73.6% | 73.6% | 71.2% |

| Palace (room-filtered) | 72.4% | 72.4% | 70.1% |

How Other Memory Systems Compare

| System | R@5 | R@10 | Notes |

|---|---|---|---|

| Tizenegy (bge-base, raw) | 96.0% | 97.6% | Production baseline, zero API calls |

| MemPalace (claimed, MiniLM) | 96.6% | 98.2% | Not reproducible in independent tests |

| Mem0 | ~85% | — | $19-249/mo cloud service |

| Zep | ~85% | — | $25/mo+, Neo4j-backed |

| Mastra (GPT-based) | 94.9% | — | Requires GPT API calls |

| Supermemory ASMR | ~99% | — | API required |

The numbers come from different runs with different methodologies, so strict comparison is dangerous. That caveat is itself the point: numbers without methodology are marketing.

Five Findings That Matter for Buyers and Builders

1. The Embedding Model Is the Single Biggest Lever

bge-base-en-v1.5 scores 96.0% where MiniLM scores 73.6%, a 22.4-percentage-point gap in Recall@5. No other architectural decision in the entire benchmark moves the number nearly that much. For organizations evaluating vendors, the first question is which embedding model they use, and the second is whether they let customers swap it.

2. LLM Extraction Does Not Improve Retrieval

The “extracted” mode (a full pipeline with entity, fact, and summary extraction) scores identically to raw embedding. Extraction earns its keep through the structured data it produces (facts, entities, contradiction detection), which feed the prompt, but it does not help the search step. Vendors that justify higher prices on the basis of “smarter extraction improving retrieval” deserve scrutiny.

3. Metadata Filtering Costs a Few Points

Palace mode loses 1.2 to 1.4 percentage points of Recall@5 relative to flat search (1.4 with bge-base-en-v1.5, 1.2 with all-MiniLM-L6-v2). Keyword-based room detection occasionally misclassifies, and the fallback to unfiltered search recovers some but not all of the loss. The trade-off is real; whether it is acceptable depends on what the structure buys elsewhere in the system.
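A toy version of keyword-based room detection shows where the loss comes from. Everything here is hypothetical: the room names, the keyword lists, the `overlap_search` stand-in for vector search, and the rule that the fallback fires only when the scoped search returns too few results. It is a sketch of the failure mode, not the benchmarked implementation.

```python
ROOM_KEYWORDS = {  # hypothetical rooms and keywords, for illustration only
    "work": {"job", "boss", "office", "meeting"},
    "family": {"sister", "mother", "father", "brother"},
}


def detect_room(query):
    """Return the first room whose keywords appear in the query, else None."""
    words = set(query.lower().split())
    for room, keywords in ROOM_KEYWORDS.items():
        if words & keywords:
            return room
    return None


def search_with_fallback(query, memories, search_fn, min_results=1):
    """Search the detected room first; fall back to flat search if it comes up short."""
    room = detect_room(query)
    if room is not None:
        scoped = [m for m in memories if m["room"] == room]
        results = search_fn(query, scoped)
        if len(results) >= min_results:
            return results, room
    return search_fn(query, memories), None


def overlap_search(query, memories):
    """Toy stand-in for vector search: keep memories sharing any word with the query."""
    words = set(query.lower().split())
    return [m for m in memories if words & set(m["text"].lower().split())]


memories = [
    {"text": "sister Emma lives in Lisbon", "room": "family"},
    {"text": "started a new job in March", "room": "work"},
]

print(search_with_fallback("how is my sister", memories, overlap_search))
print(search_with_fallback("did my sister like her job", memories, overlap_search))
```

The second query mentions both a sister and a job, is routed to the work room, and returns a result, so the fallback never fires and the family memory is missed. Flat search would have returned both.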

4. Viral Benchmark Numbers Need Independent Replication

MemPalace’s claimed 96.6% with MiniLM was not reproducible: the same model, same dataset, and honest methodology return 73.6%. The discrepancy is most likely explained by inflated top_k, different chunking, and pure cosine ranking without composite scoring. The lesson generalizes: benchmark numbers in marketing materials should be treated as hypotheses, not facts.

5. Retrieval Recall Is Necessary But Not Sufficient

A companion product that loads a hundred-token identity card on session start (“works in tech, has a sister named Emma, recently went through a breakup, loves hiking”) feels qualitatively different from one that starts cold and hopes vector search returns something useful. That difference does not show up in retrieval recall. Optimizing exclusively for the benchmark misses the product.

What This Means for Procurement and Engineering

For organizations evaluating an AI memory layer, the practical takeaways are:

- Ask for the embedding model and dimension count. This is the highest-leverage technical detail.

- Ask whether the benchmark used answer generation or only retrieval. Retrieval-only numbers do not predict user-facing quality.

- Ask for top_k values and corpus sizes. A top_k larger than the corpus is not a benchmark; it is a tautology.

- Ask for a methodology document. If the vendor cannot produce one, the benchmark is unverified.

- Run the system on representative internal data before signing. Public benchmarks measure public datasets, not the workload that will be paid for.

For teams building their own memory layer, the recommendation is shorter: pick the best embedding model, store raw text, treat extraction as a feature for prompt enrichment rather than retrieval, and A/B test palace-style structuring against flat search with real users. The benchmarks will not settle the question alone.

The Bigger Picture

The AI memory space is currently optimizing for retrieval benchmarks while under-investing in what makes a system feel like it knows the user. Recall measures whether the right memory exists in the search results. It does not measure whether the system uses that memory well, whether it contradicts itself about a job, whether it remembers a sister’s name, or whether it notices that the user has been stressed lately.

Those are the qualities that determine whether a companion product is worth paying for, and they are exactly the qualities that public benchmarks fail to capture. Tizenegy is currently A/B testing the palace structure against flat retrieval with real users on the assumption that a small recall hit, in exchange for better-structured prompts, is the right trade for a long-running relationship product. Whether that bet pays off will be settled by user behavior, not LongMemEval scores.

For any organization shipping AI features in 2026, the meta-lesson is the same: read the methodology before the headline number, and measure the things that actually shape the user experience.

Early access to Tizenegy is available on a free beta basis at the project’s landing page.

About the Author

Maya Patel

Cybersecurity Expert

Cybersecurity expert and former IT director with deep expertise in threat analysis and security architecture. Maya brings 15 years of hands-on experience protecting enterprise systems from evolving cyber threats.